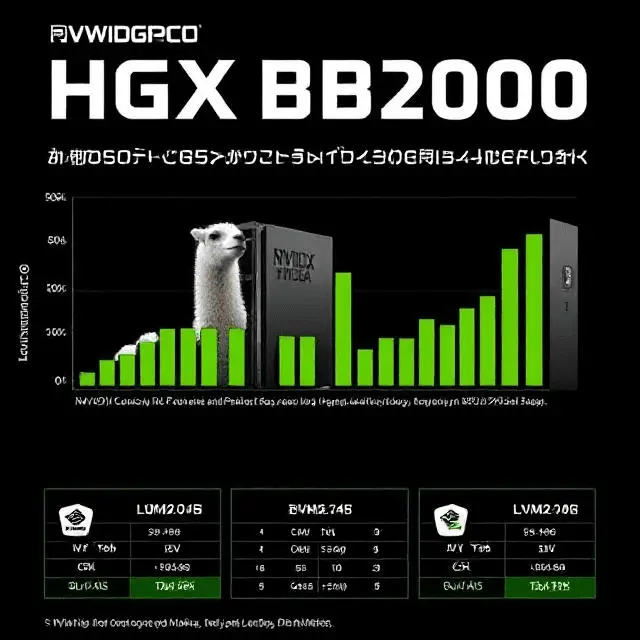

Super Micro Computer (Supermicro) has unveiled its latest NVIDIA HGX B200 systems. On the Llama2-70b and Llama3.1-405b benchmarks, they generate more than three times as many tokens per second (Token/s) as the previous-generation H200 8-GPU configurations. The result underscores Supermicro's focus on advancing AI infrastructure.

Enhanced Performance with NVIDIA Blackwell GPUs

The HGX B200 systems are built around GPUs based on NVIDIA's Blackwell architecture, which deliver substantial gains in AI processing. The more than threefold Token/s improvement over H200 8-GPU setups holds for both Llama2-70b and Llama3.1-405b. That gain matters most for applications that depend on fast, efficient AI inference.

Optimized System Design for AI Workloads

Supermicro's HGX B200 systems connect eight Blackwell GPUs over NVIDIA NVLink, which provides 1.8TB/s of bandwidth per GPU. This high-speed interconnect accelerates GPU-to-GPU communication, which is essential for training large language models (LLMs) such as GPT-MoE-1.8T. The systems also carry up to 1.5TB of high-bandwidth memory (HBM), so larger models and datasets can be held close to the GPUs for faster processing and training.
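To put the memory and interconnect figures above in perspective, the sketch below estimates the weight footprint of the models named in this article at common numeric precisions. It checks each footprint against a 1.5TB HBM pool and then multiplies the per-GPU NVLink figure across eight GPUs. This is a back-of-the-envelope illustration, not a Supermicro or NVIDIA sizing tool. It assumes 2, 1, and 0.5 bytes per parameter for FP16, FP8, and FP4, and it treats GPT-MoE-1.8T as 1.8 trillion parameters. It counts weights only: KV cache, activations, and (for training) gradients and optimizer state all add substantially more, which is why models of this size are typically trained across many nodes.

```python
# Back-of-the-envelope sizing sketch (assumption-laden, weights only):
# do a model's weights fit in the pooled HBM of one 8-GPU HGX B200 node?
# Figures taken from the article: up to 1.5 TB HBM, 1.8 TB/s NVLink per GPU.
# KV cache, activations, gradients, and optimizer state are ignored.

NUM_GPUS = 8
NODE_HBM_TB = 1.5           # "up to 1.5TB" of HBM per system
NVLINK_TB_S_PER_GPU = 1.8   # NVLink bandwidth per GPU

# Assumed storage cost per parameter for each numeric format.
BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "fp4": 0.5}


def weight_footprint_tb(params_billion: float, dtype: str) -> float:
    """Weight storage in terabytes (1 TB = 1e12 bytes)."""
    return params_billion * 1e9 * BYTES_PER_PARAM[dtype] / 1e12


def fits_in_node(params_billion: float, dtype: str) -> bool:
    """True if the weights alone fit in the node's pooled HBM."""
    return weight_footprint_tb(params_billion, dtype) <= NODE_HBM_TB


def aggregate_nvlink_tb_s() -> float:
    """Per-GPU NVLink bandwidth summed across all GPUs in the node."""
    return NUM_GPUS * NVLINK_TB_S_PER_GPU


if __name__ == "__main__":
    models = {"Llama2-70b": 70, "Llama3.1-405b": 405, "GPT-MoE-1.8T": 1800}
    for name, size_b in models.items():
        for dtype in ("fp16", "fp8", "fp4"):
            tb = weight_footprint_tb(size_b, dtype)
            print(f"{name:14s} {dtype}: {tb:6.3f} TB  fits={fits_in_node(size_b, dtype)}")
    print(f"Aggregate NVLink bandwidth: {aggregate_nvlink_tb_s():.1f} TB/s")
```

Under these assumptions, Llama3.1-405b fits in one node even at FP16 (about 0.81TB). GPT-MoE-1.8T exceeds 1.5TB at FP8 (1.8TB) and fits only at FP4 (0.9TB). Eight GPUs at 1.8TB/s each sum to 14.4TB/s of NVLink bandwidth.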

Comprehensive AI Solutions for Diverse Needs

Beyond the HGX B200 systems, Supermicro offers a range of GPU-optimized solutions tailored for various AI workloads. These include NVIDIA HGX B100 8-GPU systems, 5U/4U PCIe GPU systems with up to 10 GPUs, SuperBlade configurations with up to 20 B100 GPUs, 2U Hyper systems with up to 3 B100 GPUs, and 2U x86 MGX systems with up to 4 B100 GPUs. These options cater to diverse requirements, from enterprise-scale deployments to specialized AI applications.

Strategic Focus on AI Infrastructure

Supermicro's focus on AI infrastructure also shows in its rack-scale, plug-and-play, liquid-cooled AI SuperClusters. These solutions are optimized for NVIDIA Blackwell GPUs and are designed to simplify AI deployment while improving performance. Liquid cooling reduces energy consumption and lowers data center total cost of ownership (TCO), making AI operations more cost-effective and sustainable.

Industry Recognition and Future Prospects

The advances demonstrated by Supermicro's HGX B200 systems have drawn industry recognition and reinforce the company's position in AI hardware. As AI applications evolve, Supermicro's continued investment positions it to meet growing demand for AI compute with scalable, efficient systems across a wide range of applications.